Mending the Broken Cyber Defense Chain

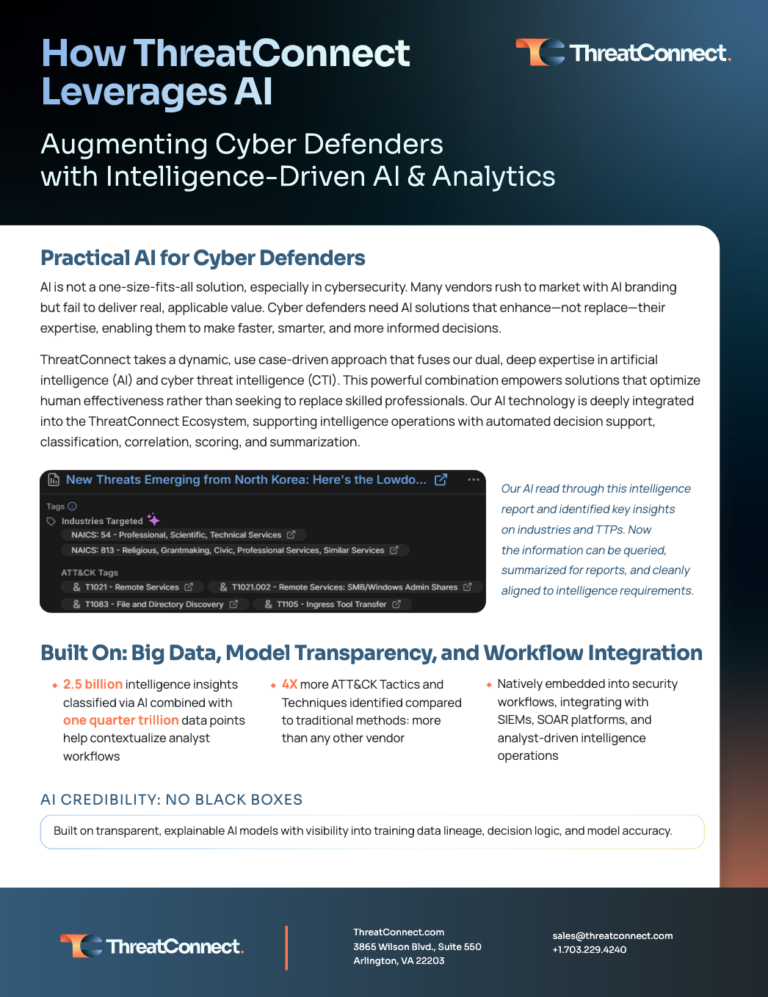

Cyber failures aren’t caused by a lack of alerts—they’re caused by the broken connections between signal, risk, and action. Learn how to bridge the gap with an agentic defense system. Modern cyber defense is failing because security teams are working harder at the wrong moments. Organizations have more data than ever, yet they still struggle […]

How a Big Four Firm Set the Standard for Unified Security Operations

For one of the world’s largest professional services firms, their threat intelligence program was becoming a high-stakes game of whack-a-mole. With over 470,000 employees, the firm was a prime target, but their security tools were working against them. Their elite analysts were hampered by: Operational Inefficiency: Manually pivoting between disconnected tools. Intelligence Fatigue: A flood […]

Utilities & Energy Enterprise Cuts False Positives by Over 75%

For critical infrastructure leaders, the noise of modern threat intelligence isn’t just annoying—it’s a liability. A major U.S. utilities and energy enterprise with over 24,000 employees faced this reality daily, struggling with fragmented tooling and excessive signal noise that left their security teams chasing ghosts instead of stopping threats. Their analysts were bogged down by […]

From Pockets of Intel to Enterprise-Wide Defense: Modernizing Threat Intel in Financial Services

Large financial institutions often face fragmented threat intelligence, which can cause data overload, slow down incident response, and reduce visibility into cyber threats. When security operations are siloed, teams spend too much time connecting disparate data points instead of making critical decisions. This was the exact challenge for one global financial enterprise with over 200,000 […]

Stop Guessing, Start Quantifying: How a CPG Giant Solved the Cyber Risk Puzzle

A leading global consumer goods company recently partnered with ThreatConnect to revolutionize its cyber risk management. By implementing ThreatConnect’s Risk Quantifier (RQ), the organization moved away from fragmented assessments toward a unified, data-driven cybersecurity strategy. Key outcomes of this partnership included: Executive & Board Clarity: The company now quantifies cyber threats in financial terms, making […]

How UCRI is Changing the Cyber Landscape: 2025 Survey Results

Stop Collecting Intelligence. Start Changing Outcomes. Security teams aren’t failing due to a lack of data—they’re drowning in it. Feeds for feeds, endless alerts, and dashboards ignored for days. The problem isn’t volume; it’s utility. When a zero-day hits or an exec asks, “Are we exposed?”, it shouldn’t take hours of searching through multiple tools. […]

How Healthcare Giants Operationalize Intel at Scale

Collecting threat intelligence is not enough—you need to act on it. One of the world’s largest healthcare services and technology enterprises faced a familiar problem: fragmented data, manual workflows, and too many false positives slowing incident response. Before ThreatConnect, their team depended on open-source tools and scattered feeds, all managed by a single analyst. This […]

Bridging the Gap Between Business and Cyber Risk Management

In today’s fast-changing digital world, understanding how business risk and cyber risk connect is key to staying ahead. For CISOs and cyber risk professionals, it’s more important than ever to know how to communicate those risks effectively to business leaders. That’s why we’re excited to share our exclusive webinar, where top industry experts break down […]

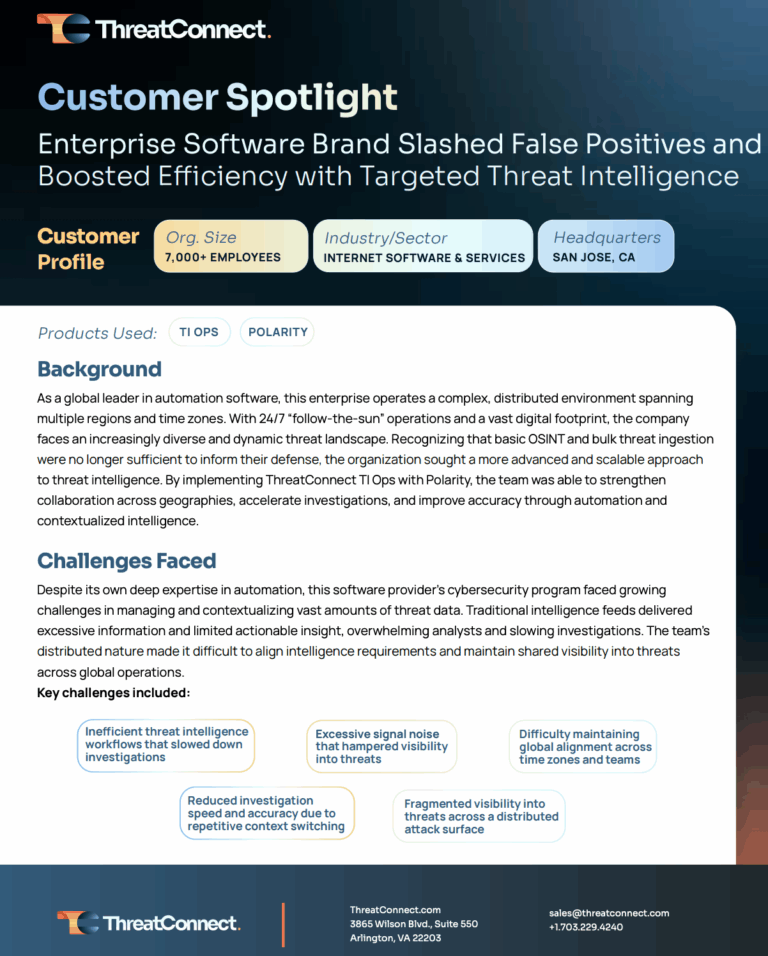

Targeted Threat Intel: Unifying Global Security Operations for an Enterprise Software Leader

A leading internet software company with over 7,000 employees faced challenges in its cybersecurity operations, including inefficient workflows, fragmented visibility, and excessive signal noise. By implementing ThreatConnect TI Ops and Polarity, they automated threat remediation, streamlined workflows, and improved collaboration across global teams. These solutions led to a 50–75% reduction in false positives, faster investigations, and enhanced overall cybersecurity.

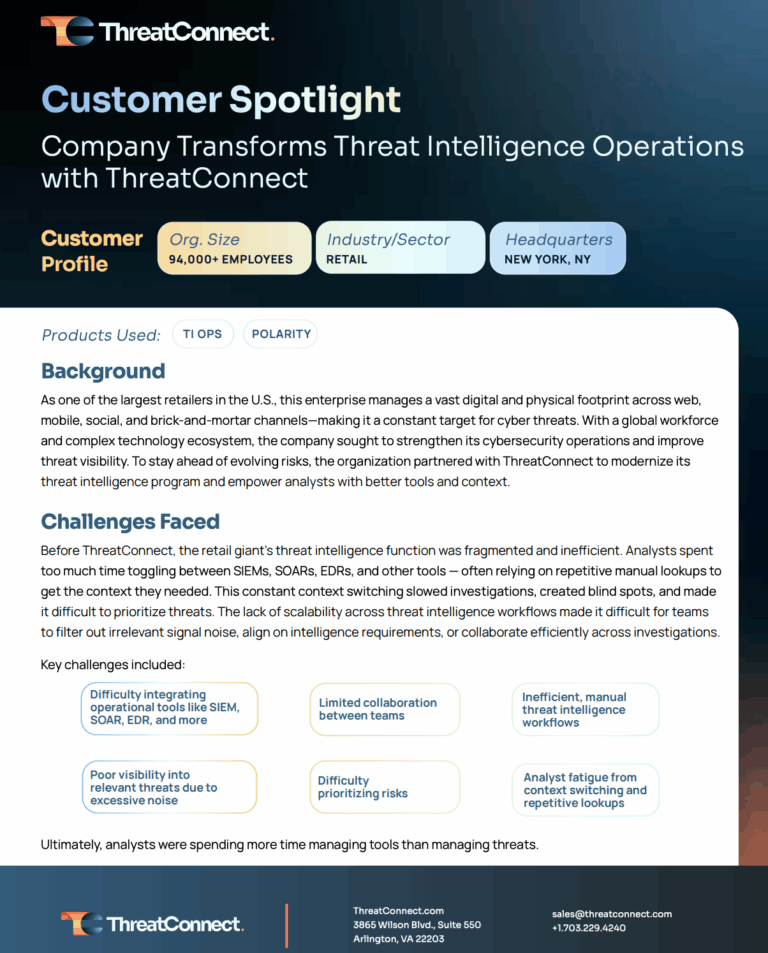

Beyond the POS: How a Leading Retailer Secured Its Enterprise with ThreatConnect

A major U.S. retailer struggled with fragmented threat intelligence, manual processes, and poor collaboration, leading to analyst fatigue and missed risks. By implementing ThreatConnect, they streamlined workflows, reduced false positives by 25%, and achieved faster, more accurate investigations. ThreatConnect is now crucial to their cybersecurity strategy.

Prioritizing the 1%: How to Focus on the Vulnerabilities That Actually Get Exploited

In today’s rapidly evolving cyber landscape, effective vulnerability management remains a daunting challenge for organizations. At the forefront of addressing this issue is the concept of prioritizing the 1% of exploits that pose the most significant risks. In this video, Mike Summers from ThreatConnect delves into this topic, offering critical insights into better managing vulnerabilities […]

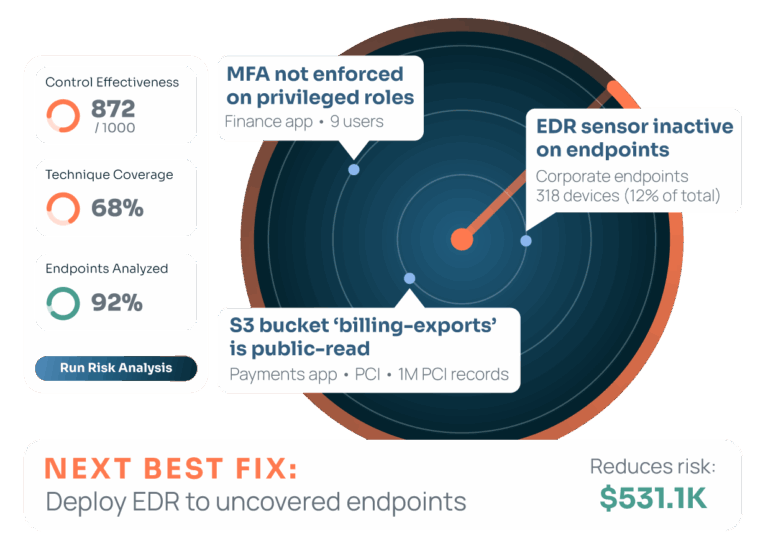

Discover RQ 9.0: Continuous Control Monitoring

Discover how ThreatConnect RQ 9.0 delivers real-time cyber risk insights by connecting vulnerabilities, attacker behaviors, and control effectiveness to financial exposure. See how RQ 9.0 empowers CISOs, security teams, and risk leaders to prioritize threats, justify decisions, and defend every action with evidence.